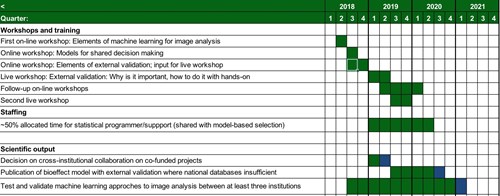

Biostatistics and modeling core

The purpose of the Biostatistics and modeling core is to support and develop a modeling network, with particular focus on raising the level of biostatistical methods applied within the DCCC center and supporting cross-institutional validation of discoveries. A biostatistics expert will be connected to the effort, and field-specific knowledge will be ensured by a medical physicist from our field with extensive biostatistical and statistical programming experience. The focus is on using the national collaboration to allow external validation of discoveries made in any given Danish center. In particular, modeling collaborations in areas where national databases are currently of insufficient detail will be considered a success.

Aim

Provide clinically credible, externally validated models of disease control and toxicity through the national network and provide methodological support and statistical oversight in high dimensionality data analysis.

Background

Wyatt and Altman have stated that the main reasons why doctors reject published prognostic models are lack of clinical credibility and lack of evidence that a prognostic model can support decisions about patient care [1]. More recently, the misapplication of high dimensionality data in clinical trials had severe consequences at Duke University (the A. Potti case). The objective of WP14 is, first, to provide clinically credible, externally validated models of treatment outcome, covering disease control and toxicity, through the national network and, second, to provide methodological support and statistical oversight in high dimensionality data analysis.

Methods

Analysis and validation of predictive/prognostic models with limited covariables per patient

We will provide a framework of senior statistical support, combined with field-specific knowledge, to promote appropriate statistical methods and data analytics for the analysis, and in particular the external validation, of prognostic and predictive models for tumor control and toxicity.

We will generate and publish interactive decision support models with absolute risk predictions, designed to include all relevant clinical modifiers of risk while remaining usable in practice. Special emphasis will be placed on the quantification and visualization of uncertainties, including those arising from observed differences in outcome between institutions. Methods to analyze and describe the burden of transient or fluctuating toxicities will also be a focus.
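One possible way to quantify uncertainty that includes between-institution differences is a two-stage (cluster) bootstrap, which resamples institutions first and patients within each institution second. The sketch below is illustrative only: the center names, the outcome counts, and the function names are hypothetical, and the percentile interval is one of several reasonable choices rather than a method prescribed by this work package.

```python
import random


def absolute_risk(outcomes_by_center):
    """Pooled event proportion across all centers (1 = event, 0 = no event)."""
    events = sum(sum(o) for o in outcomes_by_center.values())
    total = sum(len(o) for o in outcomes_by_center.values())
    return events / total


def cluster_bootstrap_interval(outcomes_by_center, n_boot=2000, level=0.95, seed=0):
    """Percentile interval for the pooled risk from a two-stage bootstrap."""
    rng = random.Random(seed)
    centers = list(outcomes_by_center)
    estimates = []
    for _ in range(n_boot):
        events = total = 0
        # Stage 1: resample centers with replacement (between-center variation).
        for center in rng.choices(centers, k=len(centers)):
            patients = outcomes_by_center[center]
            # Stage 2: resample patients within the drawn center.
            resampled = rng.choices(patients, k=len(patients))
            events += sum(resampled)
            total += len(resampled)
        estimates.append(events / total)
    estimates.sort()
    lower_index = int((1 - level) / 2 * n_boot)
    upper_index = int((1 + level) / 2 * n_boot) - 1
    return estimates[lower_index], estimates[upper_index]


# Synthetic outcomes for three hypothetical centers (not real data).
outcomes = {
    "center_A": [1] * 12 + [0] * 48,
    "center_B": [1] * 20 + [0] * 40,
    "center_C": [1] * 9 + [0] * 51,
}
point = absolute_risk(outcomes)
low, high = cluster_bootstrap_interval(outcomes)
print(f"absolute risk {point:.3f}, 95% interval {low:.3f} to {high:.3f}")
```

With only a few centers the interval is dominated by which centers are drawn, which is the between-institution uncertainty the paragraph above refers to; a hierarchical model would be an alternative when more centers are available.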

Within the framework, we will require open-source publication of all statistical source code (such as R Markdown documents with appropriate supporting text) as a necessary part of ensuring reproducibility of published studies. This does not mean that raw clinical data need to be published.

Analysis of high dimensionality datasets and machine learning

Within the financial limits of the core, we will provide an environment for support and discussion of the appropriate analysis of high dimensionality datasets, including those related to advanced image analysis and biomarkers.

Furthermore, we will aid in developing machine learning and pattern recognition methods in collaboration with computer scientists.

Collaboration on specific projects within the DCCC center

A limited budget from this work package is dedicated to supporting the external validation of models developed within member institutions. Special emphasis is on external validation through collaboration between institutional partners, where the scope of any existing national databases is expanded by institutional extensions made available through this network.

Possible collaborations include the following examples, but the first year of the WP should ideally lead to further suggestions:

1) External validation of a failure-site prediction model in locally advanced squamous cell carcinoma or adenocarcinoma of the lung. Here, a failure-site prediction model has been developed on an institutional database at Rigshospitalet and is in press in the Journal of Thoracic Oncology [2]. Strong institutional databases, in particular project-related databases in Odense and Århus, support external validation and expansion of the model. Attempts will be made to validate the model by exporting models, not databases, to alleviate data protection issues.

2) Another example is a tumor control probability (TCP) model for prostate cancer available at AUH. In this TCP model, individual patient information is included to compute the probability of local control. In particular, diffusion coefficient maps acquired prior to RT can be used to derive the cell density in the index volume and to estimate individual tumor control probabilities. This model has already shown increased dose differentiation across patients compared to classical approaches. Additionally, the model has been tested against the uncertainties that arise from including patient-specific biological information derived from functional imaging.
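To illustrate the general idea of a voxel-based TCP calculation, the sketch below combines a Poisson TCP formulation with linear-quadratic cell survival. It is not the AUH model: the linear mapping from apparent diffusion coefficient (ADC) to cell density, the parameter values (`rho_max`, `alpha`, `beta`, the ADC range), and all function names are assumptions chosen for illustration and have not been fitted to any data.

```python
import math


def cell_density_from_adc(adc, rho_max=1e8, adc_min=0.5e-3, adc_max=2.5e-3):
    """Hypothetical linear mapping: lower ADC (mm^2/s) -> higher density (cells/cm^3)."""
    fraction = (adc_max - adc) / (adc_max - adc_min)
    fraction = min(1.0, max(0.0, fraction))  # clip to the assumed ADC range
    return rho_max * fraction


def surviving_fraction(total_dose, dose_per_fraction, alpha=0.15, beta=0.05):
    """Linear-quadratic survival: SF = exp(-D * (alpha + beta * d))."""
    return math.exp(-total_dose * (alpha + beta * dose_per_fraction))


def poisson_tcp(adc_values, doses, voxel_volume_cm3, n_fractions):
    """Poisson TCP: exp(-expected number of surviving clonogens over all voxels)."""
    expected_survivors = 0.0
    for adc, dose in zip(adc_values, doses):
        clonogens = cell_density_from_adc(adc) * voxel_volume_cm3
        expected_survivors += clonogens * surviving_fraction(dose, dose / n_fractions)
    return math.exp(-expected_survivors)


# Synthetic three-voxel index volume (illustrative values only).
adc_map = [0.8e-3, 1.2e-3, 2.0e-3]
voxel_volume = 0.01  # cm^3
tcp_standard = poisson_tcp(adc_map, [78.0] * 3, voxel_volume, n_fractions=39)
tcp_reduced = poisson_tcp(adc_map, [60.0] * 3, voxel_volume, n_fractions=39)
print(f"TCP at 78 Gy: {tcp_standard:.3f}, TCP at 60 Gy: {tcp_reduced:.3f}")
```

Because the clonogen number differs per voxel and per patient, the same prescribed dose yields different TCP values, which is what allows dose differentiation across patients in models of this kind.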

An NTCP model for GI toxicity following RT for prostate cancer has also been developed at AUH. This model accounts for spatial patterns in rectal dose distributions. Using a parameterized 2D map of the rectal dose, the authors created an NTCP model predicting the risk of 6 different symptoms related to “Defecation Urgency”, “Fecal Incontinence” and “Emptying Difficulties”. Testing it in an external cohort, such as DAPROCA, would be a promising application of this WP.
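External testing of a model without moving patient data, as proposed in the examples above, could in principle work as follows: the developing center exports only the model specification, and the validating center applies it locally and returns aggregate performance measures. The sketch below assumes a logistic model serialized as JSON; the covariate names (`mean_dose_gy`, `age_decade`), the coefficients, and the small cohort are hypothetical and serve only to show the workflow.

```python
import json
import math

# Exported by the developing center: coefficients only, no patient data.
exported_model = json.dumps({
    "intercept": -2.0,
    "coefficients": {"mean_dose_gy": 0.04, "age_decade": 0.1},
})


def predict_risk(model, patient):
    """Logistic model: risk = 1 / (1 + exp(-linear predictor))."""
    linear = model["intercept"] + sum(
        coef * patient[name] for name, coef in model["coefficients"].items()
    )
    return 1.0 / (1.0 + math.exp(-linear))


def brier_score(risks, outcomes):
    """Mean squared difference between predicted risk and observed outcome."""
    return sum((r - y) ** 2 for r, y in zip(risks, outcomes)) / len(risks)


def auc(risks, outcomes):
    """Probability that an event case is ranked above a non-event case (ties = 0.5)."""
    pos = [r for r, y in zip(risks, outcomes) if y == 1]
    neg = [r for r, y in zip(risks, outcomes) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def validate(model_json, cohort):
    """Run at the validating center; only the returned summary leaves the site."""
    model = json.loads(model_json)
    risks = [predict_risk(model, p) for p in cohort]
    outcomes = [p["event"] for p in cohort]
    return {
        "n": len(cohort),
        "auc": auc(risks, outcomes),
        "brier": brier_score(risks, outcomes),
        "mean_predicted": sum(risks) / len(risks),
        "observed_rate": sum(outcomes) / len(outcomes),
    }


# Synthetic local cohort at the validating center (not real data).
local_cohort = [
    {"mean_dose_gy": 20, "age_decade": 6.0, "event": 0},
    {"mean_dose_gy": 35, "age_decade": 7.0, "event": 0},
    {"mean_dose_gy": 50, "age_decade": 6.5, "event": 1},
    {"mean_dose_gy": 55, "age_decade": 7.2, "event": 0},
    {"mean_dose_gy": 60, "age_decade": 6.8, "event": 1},
    {"mean_dose_gy": 30, "age_decade": 5.5, "event": 0},
]
summary = validate(exported_model, local_cohort)
print(summary)
```

Comparing `mean_predicted` with `observed_rate` gives a crude check of calibration-in-the-large, while the AUC and Brier score summarize discrimination and overall accuracy; a real validation would use a much larger cohort and report uncertainty for each measure.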

The first year of the DCCC work package should encourage further suggestions for specific projects under this WP, and the most promising (judged in particular on the ability to use the network for external validation and improvement of models) can receive financial support for data analysis.

Expected results

External validation of institutional prognostic/predictive studies, also in sites where national clinical databases are not currently of sufficient detail for radiotherapy modeling.

Workshops on statistical modeling with emphasis on data analysis.

Implementation of machine learning methods, such as convolutional neural nets, in collaboration with WP2.

Impact/Relevance/Ethics

Improved quality of related studies disseminated from the center.